| Issue | EPJ Appl. Metamat. Volume 13, 2026 |
|---|---|
| Article Number | 12 |
| Number of page(s) | 8 |
| DOI | https://doi.org/10.1051/epjam/2025009 |
| Published online | 13 May 2026 |


Original Article

Study of simulation model of diffractive neural network in liquid crystal optical environments

School of Artificial Intelligence Science and Technology, University of Shanghai for Science and Technology, Shanghai 200093, PR China


Received: 9 October 2025

Accepted: 17 November 2025

Published online: 13 May 2026

Abstract

The optical environment plays an important role in the modulation of nanophotonic devices, which could enable tunable optical diffractive neural networks (ODNNs) and significantly extend their capability in machine learning tasks. However, most current research on ODNNs focuses on passive nanostructures in atmospheric environments, which provide only a fixed optical learning response, and performance in other tunable spatial environments has received limited discussion. In this paper, a model of the training and testing behavior of ODNNs in different optical environments, especially in birefringent nematic liquid crystal, is systematically investigated and analyzed based on Rayleigh-Sommerfeld diffraction. ODNN models are trained in air, water and liquid crystal environments at the visible wavelength of 532 nm. The results indicate that the ODNN is sensitive to changes in the testing environment, and that the inference capability of the network degrades as the deviation between the testing environment and the training conditions grows. Different learning tasks can be carried out by tuning the optical environment of the device. Since liquid crystal is a widely used electronic material that is tunable under different external physical conditions, these results provide a starting point for introducing additional effective dimensions of optical learning in a tunable ODNN electronic device.

Key words: Tunable optical diffractive neural network / Metasurface / multi-task machine learning / liquid crystal

© X. Yang et al., Published by EDP Sciences, 2026

This is an Open Access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


1 Introduction

Traditional artificial intelligence relies on electronics for computation, but its signal processing speed and energy consumption are constrained by the limitations of von Neumann-based computing hardware. Consequently, optical computing, which utilizes photons instead of electrons for computation, has gained significant importance [1]. Optical Diffractive Neural Network (ODNN) is a recent innovation born from the integration of optics and deep learning [2]. It has been demonstrated as an effective implementation for optically trained networks, offering advantages such as light-speed transmission, low power consumption, and high parallel processing capabilities. By leveraging the interaction between light and matter, ODNN models the diffraction propagation of light and wavefront modulation as neural connections and weighted linear summation in neural networks, thereby enabling optical artificial intelligence functionalities. Physical experiments on ODNN have been reported, and research on expanding its functionalities, such as imaging [3], optical logic gate operations [4,5], image reconstruction [6], spectral imaging [7], bidirectional focusing lenses [8], multiplexing and demultiplexing [9,10], and holography [11], has also been published.

Currently, most ODNN research focuses on long wavelengths (such as the terahertz and gigahertz bands) and large-scale structures, and offers limited learning capability because the diffractive devices are not tunable. In the field of photonic information processing, there is a particular need to process shorter wavelengths such as near-infrared and visible light [12]. For ODNNs, learning with shorter-wavelength light implies smaller physical dimensions of the diffractive elements, which is crucial for structural miniaturization and device integration. At the same time, when the diffractive elements of a machine learning device become tunable, they can serve in a reconfigurable ODNN. Current research primarily explores methods that alter the nano-atom structure of the diffraction layers to achieve reconfigurability in ODNNs, such as programmable metasurface atoms [13] and magneto-optical materials [14]. Another potentially applicable method is to reconfigure the phase modulation in an ODNN by modifying the optical environment. For example, liquid crystal-based reconfigurable metasurfaces can adjust the effective dielectric constant by varying the DC voltage applied to microstrip patches on liquid crystal cells, thereby tuning the phase difference at different positions on the metasurface [15–17]. Considering the principles of ODNNs, changes in the optical environment affect both the diffraction transmission and the wavefront modulation. However, almost all current ODNN research focuses on atmospheric environments, and studies of optical machine learning modulated by other optical environments remain scarce.

In this paper, an ODNN model that realizes different machine learning tasks by tuning the optical environment is proposed and systematically investigated, including the impact of the optical environment of diffraction on ODNN performance at the visible wavelength of 532 nm. The diffractive optical environment is simulated, and training is carried out in media ranging from air to liquid crystal by modulating the optical refractive index. This study aims to explore a new possibility for reconfigurable multi-task ODNNs, in which tasks can be switched by altering the optical environment of the ODNN, and it can be seen as a starting point for developing tunable ODNN devices.

2 Material and methods

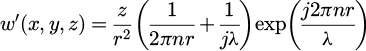

The diffractive neural network is an artificial optical neural network whose hidden layers are physically composed of several diffractive surfaces. As shown in Figure 1, each pixel unit on the diffractive surface can be seen as an optical diffractive neuron. According to the Huygens–Fresnel principle, each neuron can be considered as a secondary source of waves. The neurons between layers are interconnected via secondary waves through optical diffraction, which can be represented by the following equation:

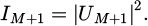

(1)

in which z is the separation between two adjacent layers, λ is the wavelength of incident light, and n is the refractive index of the optical environment. The output of the ith neuron at the pixel (xi, yi, zi) of the lth layer can then be expressed as:

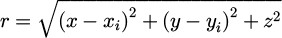

(2)

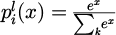

in which the complex transmission coefficient of each neuron comprises an amplitude coefficient and a phase coefficient. For a phase-only ODNN framework, the amplitude coefficient can be treated as constant, e.g. set to 1 in the ideal case. k is the number of neurons of the (l-1)th layer. The output in equation (2) is therefore a superposition of the secondary waves from all the neurons of the previous layer, which forms a fully connected neural network.
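For orientation, equations (1) and (2) can be written in a form consistent with the definitions above. The block below is a sketch that assumes the Rayleigh-Sommerfeld formulation of reference [2], with the vacuum wavelength λ replaced by λ/n inside the medium. The symbols w, u, t, a, φ and r are assumed notation introduced here for illustration and may differ from the authors' own.

```latex
% Assumed form, following the formulation of reference [2] with the
% wavelength in a medium of refractive index n taken as \lambda / n.
% r is the distance from neuron i at (x_i, y_i) to the point (x, y) on the
% next layer, a separation z away.
\begin{align}
w_i^{l}(x,y,z) &= \frac{z}{r^{2}}
  \left(\frac{1}{2\pi r} + \frac{n}{j\lambda}\right)
  \exp\!\left(\frac{j\,2\pi n r}{\lambda}\right),
  \qquad r = \sqrt{(x-x_i)^{2} + (y-y_i)^{2} + z^{2}}, \\
u_i^{l}(x,y,z) &= w_i^{l}(x,y,z)\; t_i^{l}(x_i,y_i,z_i)
  \sum_{k} u_k^{l-1}(x_i,y_i,z_i),
  \qquad t_i^{l} = a_i^{l}\exp\!\left(j\phi_i^{l}\right).
\end{align}
```

In this form the refractive index enters both the prefactor and the propagation phase, and a phase-only network corresponds to setting the amplitude term a to 1.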

Through optical diffraction, the output light from the previous layer can be transmitted as the input light for the next layer. The diffractive layers modulate the light, which then propagates forward and is output. Assuming there are M diffractive layers, the light intensity distribution at the output plane is as follows:

(3)

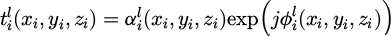

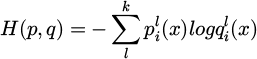
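The forward model described above (diffraction in a medium, phase modulation at each layer, intensity at the output plane) can be sketched numerically as follows. This is a minimal illustration rather than the authors' implementation: it swaps the direct Rayleigh-Sommerfeld summation for the angular spectrum method, which is a common FFT-based way to propagate a sampled field between parallel planes. The function names, the square test input and the random phase mask are hypothetical; the grid size, pixel pitch, layer spacing and wavelength are the values quoted in Section 3.

```python
import numpy as np

def propagate(field, wavelength, n_env, pitch, distance):
    """Angular-spectrum propagation through a homogeneous medium of index n_env.

    Assumption: this replaces the direct Rayleigh-Sommerfeld sum for brevity.
    """
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx, d=pitch)
    fy = np.fft.fftfreq(ny, d=pitch)
    FX, FY = np.meshgrid(fx, fy)
    # Squared longitudinal spatial frequency; the wavelength in the medium is wavelength / n_env.
    arg = (n_env / wavelength) ** 2 - FX ** 2 - FY ** 2
    kz = 2.0 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    # Evanescent components (arg <= 0) are discarded.
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def forward_intensity(input_field, phase_masks, wavelength, n_env, pitch, distance):
    """Input plane -> M phase-only layers -> output plane intensity."""
    field = propagate(input_field, wavelength, n_env, pitch, distance)
    for phase in phase_masks:
        field = field * np.exp(1j * phase)  # phase-only neurons, amplitude fixed to 1
        field = propagate(field, wavelength, n_env, pitch, distance)
    return np.abs(field) ** 2

# Hypothetical example: one random phase layer, 100 x 100 neurons, in air.
rng = np.random.default_rng(0)
input_field = np.zeros((100, 100), dtype=complex)
input_field[40:60, 40:60] = 1.0
mask = rng.uniform(0.0, 2.0 * np.pi, size=(100, 100))
intensity = forward_intensity(input_field, [mask], 532e-9, 1.0, 1.6e-6, 400e-6)
```

Changing `n_env` alters only the propagation kernel here. The FFT implies periodic boundaries, so a practical simulation would usually zero-pad the field to limit wrap-around.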

Deep learning tasks can be executed based on the output light intensity distribution. In the handwritten digit classification task, ten non-overlapping detection areas are defined on the output plane, and the optical signal intensity in each area is measured. Each detection area corresponds to one digit, and the classification criterion is to identify the area with the highest optical signal intensity. The prediction result from the forward propagation output plane is compared with the training objective of the diffractive network, and the resulting error is backpropagated through the layers. The parameters of each network layer are iteratively updated using stochastic gradient descent. A softmax function is applied before the output layer to highlight the region with the highest light intensity. This setup is solely used to enhance gradient flow during training and does not affect the training intensity. The cross-entropy function is employed as the loss function, which significantly improves the accuracy of handwritten digit classification. The cross-entropy function is defined as:

(4)

in which the two quantities are the output of the softmax layer and the real (ground-truth) value, respectively. Images from the MNIST dataset (60,000 training images and 10,000 test images) are used as inputs to train the network for digit classification, with phase modulation constrained to the range of (0, 2π).
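The read-out and loss described above can be illustrated with a short sketch. The detector layout (two rows of five 10 × 10 pixel boxes), the choice to apply the softmax to normalized energy fractions, and all function names are assumptions made for this example; the paper does not specify them.

```python
import numpy as np

def make_regions(size=10, rows=(25, 65), cols=(5, 25, 45, 65, 85)):
    """Hypothetical layout: ten non-overlapping square detectors on a 100 x 100 plane."""
    return [(slice(r, r + size), slice(c, c + size)) for r in rows for c in cols]

def energy_fractions(intensity, regions):
    """Share of the detected energy that falls in each detection region."""
    energies = np.array([intensity[r, c].sum() for r, c in regions])
    return energies / energies.sum()

def softmax(x):
    shifted = np.exp(x - np.max(x))  # subtract the maximum for numerical stability
    return shifted / shifted.sum()

def cross_entropy(probabilities, label):
    """Cross-entropy against a one-hot label reduces to -log p[label]."""
    return -np.log(probabilities[label])

# Synthetic output plane: weak uniform background plus a bright spot on detector 3.
regions = make_regions()
intensity = np.full((100, 100), 0.01)
intensity[regions[3]] = 1.0

fractions = energy_fractions(intensity, regions)
predicted = int(np.argmax(fractions))  # classification rule: brightest region wins
loss = cross_entropy(softmax(fractions), label=3)
```

The prediction depends only on which region collects the most energy, so the softmax influences the training gradient but not the inferred class.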

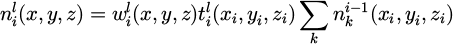

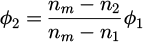

Here, we consider the impact of the spatial environment on the performance of the network model. As shown in Figure 2, the optical environment is defined by the refractive index near the nano structure pixels. Different environments primarily alter the refractive index of the space, and changes in the spatial refractive index mainly affect the optical diffractive neural network in two aspects. First, the diffraction transmission between layers is influenced, as understood from equation (1), which describes the effect of refractive index on diffraction. Second, the phase modulation of the diffractive layer changes. When a trained diffractive phase plate Φ1, obtained in a specific spatial environment with refractive index n1, is placed in a new testing environment with refractive index n2, the phase modulation parameters shift as follows:

(5)

where nm is the refractive index of the material used to fabricate the neurons. Under changes in both inter-layer diffraction and phase modulation, the performance of the optical diffractive neural network will consequently vary.
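A worked example helps show the size of this phase shift. The sketch below assumes each neuron is a pillar of height h and index nm whose phase delay relative to the surrounding medium is 2π(nm − n)h/λ, so that a phase trained in n1 is rescaled by (nm − n2)/(nm − n1) in n2. This relation is an assumption consistent with the description of equation (5), not a transcription of it. The function name is hypothetical; the indices 1.0 (air), 1.33 (water) and 1.55 (photoresist) are the values used elsewhere in the text.

```python
import numpy as np

def rescale_phase(phase_trained, n_train, n_test, n_material):
    """Rescale a trained phase mask when the surrounding index changes.

    Assumed relation: phi_2 = phi_1 * (n_m - n_2) / (n_m - n_1).
    """
    if np.isclose(n_material, n_train):
        raise ValueError("no phase contrast in the training environment")
    scale = (n_material - n_test) / (n_material - n_train)
    return np.asarray(phase_trained) * scale

phase_air = np.array([0.5 * np.pi, np.pi, 1.5 * np.pi])

# Trained in air, tested in water: every phase value shrinks to 40% of its trained value.
phase_water = rescale_phase(phase_air, n_train=1.0, n_test=1.33, n_material=1.55)

# Tested in a medium matched to the neuron material: the modulation vanishes.
phase_matched = rescale_phase(phase_air, n_train=1.0, n_test=1.55, n_material=1.55)
```

Under this assumption a trained π phase step becomes 0.4π in water, which indicates why a mask trained in air no longer focuses light on the intended detector.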

The LC material parameters used for ODNN learning were taken from the vendor's specifications (Merck Co., Ltd.) for the nematic E7 product, which is characterized by a strong birefringence between the extraordinary and ordinary refractive indices at room temperature (∆n = ne - no ≈ 0.2). The incident light was set normal to the nanostructure surface, with its polarization parallel to the x-axis. The optical environment was modulated by switching the alignment angle of the liquid crystal molecules between two states. In the OFF state, the incident polarization is aligned parallel to the slow axis of the extraordinary refractive index, i.e. the x-axis. In the ON state, the LC molecules are aligned vertically, so that the incident polarization experiences the ordinary refractive index. The phase modulation response of the liquid crystal was simulated in advance with Lumerical FDTD before being introduced into the diffractive neural network training. The networks were trained under each alignment state of the LC molecules to simulate the control of an LC-based tunable device.
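The two alignment states can be viewed as the end points of a continuous director tilt. As a rough illustration, the standard uniaxial relation below gives the index seen by x-polarized light at normal incidence when the director is tilted by an angle θ from the x-axis toward the propagation direction. The values n_o = 1.52 and n_e = 1.72 are placeholders chosen only to reproduce ∆n ≈ 0.2; they are not the vendor data used in the paper, and intermediate tilt angles are not studied in the text.

```python
import numpy as np

# Placeholder indices (assumption), chosen so that n_e - n_o = 0.2.
N_O = 1.52
N_E = 1.72

def effective_index(tilt_deg, n_o=N_O, n_e=N_E):
    """Effective index for x-polarized light at normal incidence.

    tilt_deg is the director tilt from the x-axis toward the z-axis:
    0 deg  -> horizontal alignment (OFF state), light sees n_e
    90 deg -> vertical alignment (ON state), light sees n_o
    """
    theta = np.deg2rad(tilt_deg)
    return n_o * n_e / np.sqrt(n_o ** 2 * np.cos(theta) ** 2 + n_e ** 2 * np.sin(theta) ** 2)

n_off = effective_index(0.0)    # horizontal alignment
n_on = effective_index(90.0)    # vertical alignment
n_mid = effective_index(45.0)   # intermediate tilt, for illustration only
```

Only the two end states are used for task switching in this work.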

Figure 1 The structural diagram of the optical diffractive neural network in the optical environment.

Figure 2 The sketch of principle of diffractive neural network modulable by optical environment. The inset at the right side shows the switch of tasks by control of liquid crystal molecules near the neuron pixels between vertical and horizontal alignments.

3 Results and discussion

The impact of the number of layers and the neuron density on the performance of the optical diffractive neural network (ODNN) was first analyzed to select appropriate network parameters for further study. Handwritten digit classification is used as the inference task, with the MNIST dataset (60,000 training images and 10,000 test images) serving as the training data. The network parameters are updated via error backpropagation and stochastic gradient descent, and the digit classification accuracy is reported after 20 epochs of training. Initially, the diffractive neural network is configured with 100 × 100 neurons per layer, a feature size of 1.6 μm for the diffractive neurons, and an inter-layer diffraction distance of 400 μm. At an operating wavelength of λ ∼ 532 nm (visible light), the variation in digit classification accuracy with the number of network layers is shown in Figure 3a. The classification accuracies for 1 to 5 layers are 94.19%, 94.86%, 94.81%, 94.91%, and 94.74%, respectively. Going from one to two layers improves the accuracy, demonstrating the advantage of depth, but with further layers the performance saturates, with only minor fluctuations in classification accuracy. Notably, even a single-layer network achieves a high classification accuracy of 94.19%. The accuracy is determined from the intensity ratio, defined as the ratio of the light intensity within the targeted region to the total intensity in all designated label regions. A higher intensity ratio in the targeted region means that more light is diffracted into this region, i.e. a higher probability of correct classification by the ODNN. Subsequently, taking a single-layer network as an example, the variation in classification accuracy with neuron density is analyzed, as shown in Figure 3b. The performance is optimal when the diffractive layer contains 100 × 100 neurons. Therefore, this paper primarily considers a diffractive neural network with a single layer of 100 × 100 neurons.

The diffractive neural network is trained in an air environment first for both binary classification and ten-class classification tasks to analyze the impact of task complexity. Networks with different numbers of layers (one, two, and five layers) are designed for comparison. The training results are shown in Figure 3. Figures 4a and 4e show the diffractive phase plates obtained from training the single-layer ODNN on the binary and ten-class classification tasks, respectively. When incident light encoded with the handwritten digit “0” is input into the ODNN, the proportion of optical signal intensity in the designated detection regions is obtained for both tasks. Figures 4b and 4f show the output optical field distributions for the binary and ten-class classification tasks, respectively. The red boxes indicate the detection regions corresponding to the classification labels. The optical signal intensity in the detection region corresponding to the digit “0” is significantly higher than in other regions. The bar charts showing the energy proportion in each detection region are presented in Figures 4c and 4g, indicating correct identification by the ODNN. Meanwhile, as the number of network layers increases, the binary classification task maintains 100% classification accuracy and high energy proportion, while the ten-class classification task shows a slight improvement in both classification accuracy and energy proportion, as detailed in Table 1. This demonstrates that the performance of the diffractive training is satisfactory even with a single-layer diffractive structure. Moreover, similar results can be obtained in an aqueous environment, with the binary classification task still achieving 100% classification accuracy, while the ten-class classification task achieves an accuracy of 85.77%, which is slightly lower than the performance in the air environment, likely due to light scattering in the aqueous environment.

Next, we take the single-layer diffractive neural network as an example, and the model previously trained in air is subsequently tested in an aqueous environment. Under the condition of a refractive index difference of Δn ∼ 0.33 between the two environments, the phase modulation of the diffractive layers in the network also changes. When incident light encoded with the same handwritten digit “0” is input, the energy distribution across the detection regions becomes highly uniform for both the binary and ten-class classification networks, as shown in Figures 4d and 4h. The energy proportion in the detection region corresponding to digit “0” drops from 96.92% to 41.28% for the binary classification task, and from 41.32% to 7.652% for the ten-class task. Concurrently, the classification accuracy of the binary task decreases to 61.65%, while that of the ten-class task drops to 10.78%. Similarly, when a model trained in the aqueous environment is tested in air, the classification accuracy of the binary task drops from 100% to 59.86%, and the ten-class task accuracy falls to 7.89%. These results clearly demonstrate that the optical diffractive neural network loses its original inference capability when the testing environment does not match the training conditions.

To further investigate the adaptability of the optical diffractive neural network to testing environments, this study uses air and aqueous environments as examples. Binary and ten-class classification networks were trained in each environment, and their performance was subsequently tested in environments with refractive indices ranging from n = 1 to 1.8. In Figure 5, panels (a) and (b) present the results of the binary classification networks trained in air and aqueous environments, respectively, and panels (c) and (d) show the corresponding results for the ten-class classification networks. Each network model achieves its best digit classification accuracy when tested under its own training conditions. As the refractive index of the testing environment deviates further from that of the training environment, the inference performance of the network progressively declines until it is lost entirely. When the task shifts from binary to ten-class classification (i.e., task complexity increases), the network's adaptability to the testing environment decreases. This is evident from the effective testing environment range within which the network maintains reliable inference performance. For the binary classification network trained in air, performance is considered suboptimal when the digit classification accuracy drops below 70%, which corresponds to a permissible deviation in the refractive index of the testing environment of approximately Δn ∼ 0.28. In contrast, for the ten-class classification network, performance is deemed suboptimal when the classification accuracy falls below 50%, with a corresponding permissible refractive index deviation of only Δn ∼ 0.2. Furthermore, network models trained in high-refractive-index environments are more sensitive to changes in the testing environment and therefore have a narrower effective testing environment range. For instance, the permissible refractive index deviations for the binary and ten-class classification networks trained in an aqueous environment are Δn ∼ 0.2 and Δn ∼ 0.1, respectively.
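The permissible deviation used above can be read off an accuracy sweep with a small helper. The sketch below is one possible definition, assumed for illustration: starting from the grid point nearest the training index, it walks outward while the accuracy stays at or above the threshold and returns the largest index offset reached. The function name is hypothetical and the accuracy curve is synthetic, made up for this example rather than taken from Figure 5.

```python
import numpy as np

def permissible_deviation(n_test, accuracy, n_train, threshold):
    """Largest |n_test - n_train| reachable from n_train with accuracy >= threshold."""
    n_test = np.asarray(n_test, dtype=float)
    accuracy = np.asarray(accuracy, dtype=float)
    order = np.argsort(n_test)
    n_test, accuracy = n_test[order], accuracy[order]

    start = int(np.argmin(np.abs(n_test - n_train)))  # grid point closest to training index
    if accuracy[start] < threshold:
        return 0.0

    low = high = start
    while low > 0 and accuracy[low - 1] >= threshold:
        low -= 1
    while high < len(n_test) - 1 and accuracy[high + 1] >= threshold:
        high += 1
    return float(max(n_train - n_test[low], n_test[high] - n_train))

# Synthetic sweep (not measured data): a model trained in air, tested from n = 1.0 to 1.8.
n_sweep = np.linspace(1.0, 1.8, 9)
synthetic_accuracy = np.array([0.99, 0.95, 0.80, 0.60, 0.52, 0.50, 0.55, 0.51, 0.50])
delta_n = permissible_deviation(n_sweep, synthetic_accuracy, n_train=1.0, threshold=0.70)
```

The result is limited by the sweep resolution, so a finer grid of test indices gives a sharper estimate of the boundary.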

It is important to consider the material used for fabricating the diffractive layers in the experiments. This study uses photoresist with a refractive index of nm = 1.55 as the sample material. When the refractive index of the testing environment matches that of the diffractive layer material (nm = n2), the network loses its inference performance regardless of the training conditions. This can be explained by the phase shift reduction described in equation (5) when nm = n2. On the other hand, the effective testing environment range of the optical diffractive neural network shrinks as the number of network layers increases. For example, when the number of layers increases from one to five, the permissible refractive index deviation narrows from Δn ∼ 0.1 to Δn ∼ 0.04. This is because the increased network complexity amplifies the impact of the optical environment on multi-layer diffraction modulation, leading to a more pronounced environmental response.

Next, we consider using the ODNN to realize both digit recognition and parity classification by switching the LC alignment. The parameters of the diffractive pixels were first trained in an LC environment with horizontal alignment (OFF state) for digit recognition and with vertical alignment (ON state) for parity classification. As shown in Figures 6a and 6b, separate samples were first trained and prepared for each task alone and tested with handwritten digits. For the input handwritten digit 1, the accuracy is about 77.03% for ten-digit classification and 91.34% for parity classification between even and odd. To explore the possibility of a two-task switchable ODNN controlled by LC, a combined sample is prepared by cross-arranging the pixels previously trained for the two tasks. As shown in Figures 6c and 6d, the digits are still correctly recognized with respect to both digit identity and parity. The accuracies decrease to 64.25% for digit recognition and 88.26% for parity classification, which can be attributed to the crosstalk of optical diffraction when the two structures are combined in the same ODNN structure.
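The combination step can be sketched as follows. The exact pixel arrangement of the combined sample is not specified in the text, so a checkerboard interleave of two separately trained phase masks is assumed here purely for illustration, and the function name and the uniform test masks are hypothetical.

```python
import numpy as np

def interleave_checkerboard(mask_a, mask_b):
    """Combine two phase masks pixel by pixel in a checkerboard pattern (assumed layout)."""
    mask_a = np.asarray(mask_a)
    mask_b = np.asarray(mask_b)
    if mask_a.shape != mask_b.shape:
        raise ValueError("both masks must have the same shape")
    rows, cols = np.indices(mask_a.shape)
    # Even (row + col) sites take task A pixels, odd sites take task B pixels.
    return np.where((rows + cols) % 2 == 0, mask_a, mask_b)

# Hypothetical masks: task A (digit recognition) and task B (parity classification).
mask_digits = np.zeros((4, 4))
mask_parity = np.ones((4, 4))
combined = interleave_checkerboard(mask_digits, mask_parity)
```

Each task then keeps only half of its trained pixels, with the other half acting as a perturbation, which is one way to picture the crosstalk and the drop in accuracy reported for the combined sample.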

In summary, the influence of the testing environment on the inference performance of optical diffractive neural networks intensifies with increasing network complexity. The LC can be used to switch the task between digit recognition and parity classification while still giving correct results. This provides a new degree of freedom for ODNN design. By accounting for the impact of the optical environment during the training phase, it is possible to achieve satisfactory inference performance for optical diffractive neural networks in arbitrary environments. This approach holds promise for achieving reconfigurability in optical diffractive neural networks, especially in LC devices.

Figure 3 The variation in numerical classification accuracy of the network under different parameters. (a) Layer number, (b) diffractive neuronal arrays.

Figure 4 Binary classification tasks; (a) diffractive phase plates, (b) output intensity, (c) energy proportion in air environment testing, (d) energy proportion in aqueous environment testing, (e)–(h) ten classification tasks.

Table 1 ODNN test in free space with different layers.

Figure 5 Binary classification network model tested in refractive index n = 1∼1.8 environment (a) train in air, (b) train in aqueous; ten classification network model tested in refractive index n = 1∼1.8 environment (c) train in air, (d) train in aqueous.

Figure 6 Comparison of LC modulable ODNN based on hand writing digit: (a) digital recognition and (b) parity classification task tested under horizontal and vertical LC alignment environments for digit 1. (c) Digital recognition tested for a two-task combined sample and (d) comparison of parity classification between digit 1 and 8 for the combined sample.

4 Conclusion

Based on the Rayleigh-Sommerfeld diffraction theory, this study establishes an optical diffractive neural network. Models trained in air and aqueous environments for binary and ten-class classification tasks accurately perform inference tasks. Subsequently, these models were tested in environments with different refractive indices to evaluate performance changes. The inference capability of the network declines as the deviation between the testing and training environments increases, maintaining qualified performance only within a specific deviation range. Furthermore, the study concludes that the network exhibits higher sensitivity to the testing environment as the complexity of both the inference tasks and the network itself increases. Due to the responsiveness of optical diffractive neural networks to testing environments, an additional degree of freedom can be incorporated into their design. This perspective may guide future development of multi-task optical diffractive neural networks. Additionally, this research is significant for applying optical diffractive neural networks in image detection within other fields, such as biomedical applications. Leveraging this characteristic, future work could potentially expand the functionality of optical diffractive neural networks to areas such as solution concentration detection.

Funding

This work was supported by the Science and Technology Commission of Shanghai Municipality (Grant No. 21DZ1100500), the Shanghai Municipal Science and Technology Major Project, the Shanghai Frontiers Science Center Program (2021-2025 No. 20), the National Key Research and Development Program of China (Grant No. 2021YFB2802000), and the National Natural Science Foundation of China (Grant No. 62205209).

Conflicts of interest

The authors declare no conflicts of interest.

Data availability statement

Data underlying the results presented in this paper are not publicly available at this time but may be obtained from the authors upon reasonable request.

Author contribution statement

Conceptualization, supervision and funding acquisition: Mingyu Sun. Investigation and writing: Xu Yang and Li Fang. Data collection and data curation: Bowei Wu.

References

- Y. Shen, N.C. Harris, S. Skirlo et al., Deep Learning with Coherent Nanophotonic Circuits, Nat. Photon. 11, 441 (2017) https://doi.org/10.1038/nphoton.2017.93

- X. Lin, Y. Rivenson, N.T. Yardimci et al., All-Optical Machine Learning Using Diffractive Deep Neural Networks, Science 361, 1004 (2018) https://doi.org/10.1126/science.aat8084

- D. Liao, K.F. Chan, C.H. Chan et al., All-Optical Diffractive Neural Networked Terahertz Hologram, Optics Lett. 45, 2906 (2020) https://doi.org/10.1364/OL.394046

- C. Qian, X. Lin, X. Lin et al., Performing Optical Logic Operations by a Diffractive Neural Network, Light Sci. Appl. 9, 59 (2020) https://doi.org/10.1038/s41377-020-0303-2

- Y. Luo, D. Mengu, A. Ozcan, Cascadable All-Optical NAND Gates Using Diffractive Networks, Sci. Rep. 12, 7121 (2022) https://doi.org/10.1038/s41598-022-11331-4

- D. Mengu, M. Veli, Y. Rivenson et al., Classification and Reconstruction of Spatially Overlapping Phase Images Using Diffractive Optical Networks, Sci. Rep. 12, 8446 (2022) https://doi.org/10.1038/s41598-022-12020-y

- D. Mengu, A. Tabassum, M. Jarrahi et al., Snapshot Multispectral Imaging Using a Diffractive Optical Network, Light Sci. Appl. 12, 86 (2023) https://doi.org/10.1038/s41377-023-01135-0

- W. Jia, D. Lin, R. Menon et al., Machine Learning Enables the Design of a Bidirectional Focusing Diffractive Lens, Optics Lett. 48, 2425 (2023) https://doi.org/10.1364/OL.489535

- Y. Luo, D. Mengu, N.T. Yardimci et al., Design of Task-Specific Optical Systems Using Broadband Diffractive Neural Networks, Light Sci. Appl. 8, 112 (2019) https://doi.org/10.1038/s41377-019-0223-1

- P. Wang, W. Xiong, Z. Huang et al., Diffractive Deep Neural Network for Optical Orbital Angular Momentum Multiplexing and Demultiplexing, IEEE J. Selected Topics Quantum Electron. 28, 7500111 (2022) https://doi.org/10.1109/JSTQE.2021.3077907

- M.S. Sakib Rahman, A. Ozcan, Computer-Free, All-Optical Reconstruction of Holograms Using Diffractive Networks, ACS Photon. 8, 3375 (2021) https://doi.org/10.1021/acsphotonics.1c01365

- H. Chen, J. Feng, M. Jiang et al., Diffractive Deep Neural Networks at Visible Wavelengths, Engineering 7, 1483 (2021) https://doi.org/10.1016/j.eng.2020.07.032

- X. Luo, Y. Hu, X. Ou et al., Metasurface-Enabled on-Chip Multiplexed Diffractive Neural Networks in the Visible, Light Sci. Appl. 11, 158 (2022) https://doi.org/10.1038/s41377-022-00844-2

- T. Fujita, H. Sakaguchi, J. Zhang et al., Magneto-Optical Diffractive Deep Neural Network, Optics Express 30, 36889 (2022) https://doi.org/10.1364/OE.470513

- M. Sun, X. Xu, X.W. Sun et al., Efficient Visible Light Modulation Based on Electrically Tunable All Dielectric Metasurfaces Embedded in Thin-Layer Nematic Liquid Crystals, Sci. Rep. 9, 8673 (2019) https://doi.org/10.1038/s41598-019-45091-5

- Y.Q. Hu, X.N. Ou, T.B. Zeng et al., Electrically Tunable Multifunctional Polarization-Dependent Metasurfaces Integrated with Liquid Crystals in the Visible Region, Nano Lett. 21, 4554 (2021) https://doi.org/10.1021/acs.nanolett.1c00104

- S.Q. Li, X.W. Xu, R.M. Veetil et al., Phase-Only Transmissive Spatial Light Modulator Based on Tunable Dielectric Metasurface, Science 364, 1087 (2019) https://doi.org/10.1126/science.aaw6747

Cite this article as: Xu Yang, Li Fang, Bowei Wu, Mingyu Sun, Study of simulation model of diffractive neural network in liquid crystal optical environments, EPJ Appl. Metamat. 13, 12 (2026), https://doi.org/10.1051/epjam/2025009
